AlgorithmWatch is a human rights organization based in Zurich and Berlin. We fight for a world where algorithms and Artificial Intelligence (AI) do not weaken justice, democracy, and sustainability, but strengthen them.

Journalistic stories

How does automated decision-making (ADM) affect our daily lives? Where are these systems applied, and what happens when something goes wrong? Read our journalistic investigations into the current use of ADM systems and their consequences.

Projects

Our research projects take a specific look at automated decision-making in certain sectors. You can also get involved!

Publications

Read our comprehensive reports, analyses, and working papers on the impact and ethical questions of algorithmic decision-making, written in collaboration with our network of researchers and civil society experts.

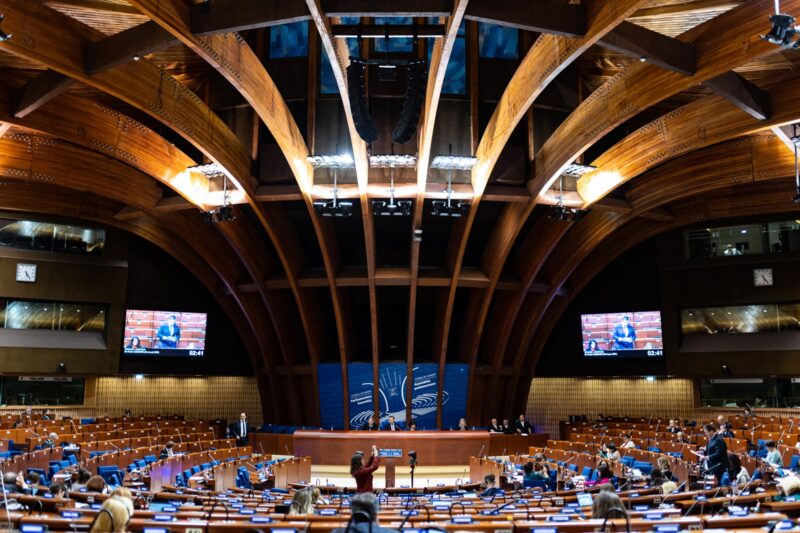

Positions

Here you can find our positions on current policy and regulatory processes concerning algorithmic decision-making systems and online platforms.

Blog

Here you can find updates from AlgorithmWatch Switzerland: current events, campaigns, and news about our team.